This is an ongoing experiment to test and learn about different ways to monitor Slurm HPC clusters built with AWS ParallelCluster. I will keep this page updated, and if there is interest, we can publish the repo containing the Terraform plans and Lambda helpers used in the current implementation.

Questions or comments? Feel free to reach out directly at dag@bioteam.net.

ParallelCluster Monitoring Pain Points

Not all HPC errors are user- or job-related, especially on AWS, where compute resources are often created dynamically, with a lot of “invisible” AWS services orchestrating in the background.

Scientific end users have no visibility into HPC cluster problems caused by infrastructure or AWS issues, such as exhausted vCPU quotas or EC2 capacity shortages. Instead, they are presented with error responses like this:

ubuntu@login:~$ srun -p s-mgpu-48c-192g-3t --pty /bin/bash -i
srun: error: Node failure on s-mgpu-48c-192g-3t-dy-g612xl-1
srun: error: Nodes s-mgpu-48c-192g-3t-dy-g612xl-1 are still not ready
srun: error: Something is wrong with the boot of the nodes.

Compounding this problem, transient issues sometimes become semi-permanent issues that require human intervention to resolve and repair. When certain failure counts exceed a configurable threshold, AWS ParallelCluster will proactively place a cluster or Slurm partition into a special “Protected Mode” status that can ONLY be cleared manually by an HPC administrator. The default threshold appears to be 10 failures.

This means that even “transient” AWS issues, such as a temporary EC2 capacity shortage in one Availability Zone that resolves on its own, can still leave a Slurm partition unusable until an administrator manually clears protected mode.

Example

Here is a recent example from a cluster that was undergoing saturation load testing. Several of the Slurm partitions on this cluster can scale up to 256 compute nodes. By default there are zero compute nodes as they are all dynamically created only when jobs are pending.

Although the AWS account in question had ample EC2 vCPU quota to run far more than 250 concurrent servers, AWS was unable to fulfill the fleet creation request because it had no additional capacity at the time the tests were being run.

As a result, after 10 consecutive failures to create new compute nodes, the cluster was placed into protected mode and the affected Slurm partitions were marked inactive, leaving them unable to run any jobs at all.

What the scientific end-user sees:

ubuntu@login:~$ sinfo
PARTITION AVAIL TIMELIMIT NODES STATE NODELIST
default-q* up infinite 1 idle~ default-q-dy-c62xl-1
default-q* up infinite 1 idle default-q-st-c62xl-1
s-2c-4g-118g up 3-00:00:00 20 idle~ s-2c-4g-118g-dy-c6l-[1-20]
d-2c-4g-118g up 3-00:00:00 20 idle~ d-2c-4g-118g-dy-c6l-[1-20]
s-4c-8g-474g up 3-00:00:00 20 idle~ s-4c-8g-474g-dy-c62xl-[1-20]
d-4c-8g-237g inact 3-00:00:00 240 idle~ d-4c-8g-237g-dy-c6xl-[1-7,9-11,13-19,21-52,54-55,57-66,68-144,146-147,149-151,153-177,179-189,191-202,204-205,207-228,230-233,235-251,253-256]
d-4c-8g-237g inact 3-00:00:00 16 down~ d-4c-8g-237g-dy-c6xl-[8,12,20,53,56,67,145,148,152,178,190,203,206,229,234,252]
d-4c-16g-237g inact 3-00:00:00 229 idle~ d-4c-16g-237g-dy-m6xl-[1-20,22-41,43-71,73-82,84-105,108-113,115-120,122-128,130,132-148,150,153-154,156,158-170,172-183,185-189,191,193,195-197,199-214,217-218,220-235,237-245,247,249-256]
d-4c-16g-237g inact 3-00:00:00 27 down~ d-4c-16g-237g-dy-m6xl-[21,42,72,83,106-107,114,121,129,131,149,151-152,155,157,171,184,190,192,194,198,215-216,219,236,246,248]
d-8c-61g-2t up 3-00:00:00 20 idle~ d-8c-61g-2t-dy-i32xl-[1-20]
d-12c-96g-7t up 3-00:00:00 20 idle~ d-12c-96g-7t-dy-i33xl-[1-20]
d-16c-128g-300g up 3-00:00:00 10 idle~ d-16c-128g-300g-dy-r54xl-[1-10]
s-gpu-4c-8g-250g up 3-00:00:00 10 idle~ s-gpu-4c-8g-250g-dy-g6xl-[1-10]
s-mgpu-48c-192g-3t up 3-00:00:00 2 idle~ s-mgpu-48c-192g-3t-dy-g612xl-[1-2]
d-gpu-4c-8g-250g up 3-00:00:00 10 idle~ d-gpu-4c-8g-250g-dy-g6xl-[1-10]
d-mgpu-48c-192g-3t up 3-00:00:00 2 idle~ d-mgpu-48c-192g-3t-dy-g612xl-[1-2]
d-128c-256g-7t up 3-00:00:00 1 idle~ d-128c-256g-7t-dy-c632xl-1
d-48c-96g-3t up 3-00:00:00 2 idle~ d-48c-96g-3t-dy-c612xl-[1-2]
s-128c-256g-7t up 3-00:00:00 1 idle~ s-128c-256g-7t-dy-c632xl-1
s-48c-96g-3t up 3-00:00:00 2 idle~ s-48c-96g-3t-dy-c612xl-[1-2]

That is what the user sees: Slurm partitions in the “inact” (inactive) state, with some of their nodes marked “down~”, that cannot run any jobs.

What IT, HPC and Cloud Admins can see (if they know where to look!)

The “reason” for the inactive Slurm partitions is buried inside one of the many ParallelCluster log files on the HeadNode (here, the clustermgtd log), which are streamed into a CloudWatch Log Group if that setting is enabled:

2026-01-23 18:06:34,184 - [slurm_plugin.clustermgtd:_handle_protected_mode_process] - WARNING - Cluster is in protected mode due to failures detected in node provisioning. Please investigate the issue and then use 'pcluster update-compute-fleet --status START_REQUESTED' command to re-enable the fleet
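To show what a filter actually has to match, here is a minimal Python sketch that splits that line into fields. The layout `timestamp - [logger:function] - LEVEL - message` is inferred from this single example line, not from documented ParallelCluster behavior, and `parse_log_line` and `is_protected_mode` are hypothetical helper names.

```python
import re
from datetime import datetime
from typing import Optional

# Assumed layout, inferred from the one example line above:
#   <timestamp> - [<logger>:<function>] - <LEVEL> - <message>
LOG_LINE = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3}) - "
    r"\[(?P<logger>[^:\]]+):(?P<function>[^\]]+)\] - "
    r"(?P<level>[A-Z]+) - (?P<message>.*)$"
)


def parse_log_line(line: str) -> Optional[dict]:
    """Split one log line into named fields; return None if it does not fit."""
    match = LOG_LINE.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # The timestamp uses a comma before the milliseconds.
    fields["timestamp"] = datetime.strptime(fields["timestamp"], "%Y-%m-%d %H:%M:%S,%f")
    return fields


def is_protected_mode(line: str) -> bool:
    """True for the WARNING line that announces protected mode."""
    fields = parse_log_line(line)
    return (
        fields is not None
        and fields["level"] == "WARNING"
        and fields["message"].startswith("Cluster is in protected mode")
    )


example = (
    "2026-01-23 18:06:34,184 - [slurm_plugin.clustermgtd:_handle_protected_mode_process] "
    "- WARNING - Cluster is in protected mode due to failures detected in node provisioning. "
    "Please investigate the issue and then use 'pcluster update-compute-fleet --status "
    "START_REQUESTED' command to re-enable the fleet"
)
print(parse_log_line(example)["function"])  # _handle_protected_mode_process
print(is_protected_mode(example))           # True
```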

Why this is embarrassing

As someone who has spent his entire career supporting scientists working on data-intensive life science infrastructure, it’s always embarrassing when end users notice a problem before IT does. These are the IT problems I like to get ahead of when I can, because it never feels good when the first sign of a problem is end users being inconvenienced or pipelines grinding to a halt.

ParallelCluster is great at Monitoring but it does not Alert humans by default

I love ParallelCluster; it gets better with every release. It’s been great to see how much the CloudWatch Dashboards, log groups, composite alarms and metrics have improved over time. Every cluster I deploy has monitoring turned on, although with a deliberately short log retention period, because CloudWatch Log Groups that never expire are financially wasteful when the logs are not actually needed for compliance, audit or regulatory reasons.

My cluster config files usually contain this Monitoring block:

Monitoring:
  Logs:
    CloudWatch:
      Enabled: true
      RetentionInDays: 7

I find seven-day retention reasonable for most use cases; I rarely want cluster logs older than that. Beyond seven days I usually care only about Slurm accounting logs, not cluster log files.

To see what AWS ParallelCluster does for you by default, review this URL: https://docs.aws.amazon.com/parallelcluster/latest/ug/monitoring-overview.html.

Out of the box you get the following:

- All of the interesting logs streamed, in an organized layout, into a per-cluster CloudWatch Log Group

- A custom CloudWatch Dashboard for each cluster with easy access to log streams, metrics and alarms

Image source: https://docs.aws.amazon.com/images/parallelcluster/latest/ug/images/CW-dashboard.png

The things I’m most interested in seeing, however, are somewhat hidden away as graphed composite metrics on that dashboard:

Each one of those graphed composite items is something that I, as an HPC support person or cluster operator, would like to know about, ideally as soon as possible. I am very interested in:

- Instance Provisioning Errors

- Unhealthy Instance Errors

- Custom Action Errors

My ParallelCluster Monitoring/Alerting Goals

- Learn about ParallelCluster issues before end users report them
- Real-time or near real-time alerts for the following HPC cluster or compute fleet conditions:
  - Cluster enters PROTECTED MODE state for any reason
  - Compute fleet enters STOPPED state for any reason
  - Bootstrap failures when using s3:// scripts configured as part of an OnNodeStart (or OnNodeConfigured) CustomAction
  - Node creation issues originating from the AWS EC2 service due to:
    - Insufficient Capacity errors
    - vCPU quota limit breached

Turning Monitoring/Alerting Goals into Terraform

Step 1 – SNS Topic

The first resource we need is an AWS SNS Topic, the standard design pattern for receiving messages or alerts and letting different consumers subscribe to them. Our SNS Topic has two kinds of subscribers:

- Standard email subscribers, who receive an email each time a message is delivered to the Topic

- A simple Python Lambda function, also subscribed to the SNS Topic, that receives each message, formats it for better readability and delivers it to a Slack channel via an incoming webhook HTTPS URL
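The Lambda's source is not included in this post, so the following is only a minimal sketch of what an SNS-to-Slack forwarder could look like, using just the Python standard library. Assumptions: a Slack incoming webhook, two hypothetical environment variables (`SLACK_WEBHOOK_URL` and `ENVIRONMENT_NAME`), and CloudWatch alarm notifications carrying the usual `AlarmName`, `NewStateValue`, `NewStateReason` and `AlarmDescription` fields. Anything that is not an alarm JSON document is forwarded unchanged with an environment prefix.

```python
import json
import os
import urllib.request

# Hypothetical environment variable names; set them on the Lambda function.
WEBHOOK_ENV = "SLACK_WEBHOOK_URL"
ENVIRONMENT_ENV = "ENVIRONMENT_NAME"


def format_alarm(message: str, environment: str) -> str:
    """Turn an SNS message body into Slack text.

    CloudWatch alarm notifications are JSON; any other message (for example a
    pre-formatted log summary) is passed through with an environment prefix.
    """
    try:
        alarm = json.loads(message)
    except json.JSONDecodeError:
        return f"[{environment}] {message}"
    if not isinstance(alarm, dict) or "AlarmName" not in alarm:
        return f"[{environment}] {message}"
    state = alarm.get("NewStateValue", "UNKNOWN")
    icon = ":red_circle:" if state == "ALARM" else ":large_green_circle:"
    lines = [
        f"{icon} *{state}*: {alarm['AlarmName']} ({environment})",
        f"Description: {alarm.get('AlarmDescription') or 'n/a'}",
        f"Reason: {alarm.get('NewStateReason', 'n/a')}",
    ]
    return "\n".join(lines)


def post_to_slack(webhook_url: str, text: str) -> int:
    """POST a message to a Slack incoming webhook and return the HTTP status."""
    body = json.dumps({"text": text}).encode("utf-8")
    request = urllib.request.Request(
        webhook_url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


def lambda_handler(event, context):
    """Entry point for an SNS-triggered Lambda: one Slack post per SNS record."""
    webhook_url = os.environ[WEBHOOK_ENV]
    environment = os.environ.get(ENVIRONMENT_ENV, "unknown-env")
    for record in event["Records"]:
        text = format_alarm(record["Sns"]["Message"], environment)
        post_to_slack(webhook_url, text)
    return {"statusCode": 200}
```

Keeping the formatting in its own function means it can be tested locally without calling Slack or AWS.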

Step 2 – CloudWatch Metric/Alarm Pairs and a CloudWatch Subscription Filter

I had to break my monitoring setup into two different styles of monitored conditions:

- Simple conditions where basic “ALARM” or “OK” status was sufficient, with no additional contextual details needed.

- More complex conditions where I wanted to send detailed information extracted from JSON-formatted log entries into the Alert Message

For the simple conditions, the well-established CloudWatch Metric / CloudWatch Alarm pairing is sufficient. We create a new metric with a default value of 0 and a metric filter that scans the log group for the patterns we care about; every matching log line adds 1 to the metric, and the alarm evaluates the metric with the Sum statistic. The paired Alarm is even simpler: it is in OK status when the metric value is 0 and in ALARM status when the value is >= 1.
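The metric/alarm logic above can be modeled in a few lines of Python. This is a simplified sketch assuming the filter looks for the text `WARNING - Cluster is in protected mode`; it ignores how CloudWatch treats evaluation periods with no log events at all (missing data), and the placeholder log lines are not real ParallelCluster output.

```python
import re

# Python stand-in for the protected-mode filter pattern.
PATTERN = re.compile(r"WARNING - Cluster is in protected mode")
DEFAULT_VALUE = 0  # value contributed by log lines that do not match
THRESHOLD = 1      # the alarm fires when the summed metric is >= 1


def metric_value(lines_in_period):
    """Sum of the metric over one evaluation period (the Sum statistic).

    Each matching log line contributes 1; non-matching lines contribute the
    default value of 0.
    """
    return sum(1 if PATTERN.search(line) else DEFAULT_VALUE for line in lines_in_period)


def alarm_state(lines_in_period):
    """OK while the metric is 0, ALARM once it reaches the threshold."""
    return "ALARM" if metric_value(lines_in_period) >= THRESHOLD else "OK"


# Three evaluation periods of placeholder log lines.
periods = [
    ["... - INFO - (unrelated log line)"],
    ["... - WARNING - Cluster is in protected mode due to failures detected in node provisioning."],
    ["... - INFO - (unrelated log line)"],
]
for number, lines in enumerate(periods, start=1):
    print(number, metric_value(lines), alarm_state(lines))
# prints: 1 0 OK, then 2 1 ALARM, then 3 0 OK
```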

Whenever the Alarm is triggered we notify the SNS Topic and whenever the Alarm clears back to OK status we also notify the SNS Topic.
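The post does not reproduce its Terraform, so as a rough illustration here is how the same Metric Filter / Alarm pair could be expressed through the AWS API with boto3. This is a sketch, not the author's code: the filter and alarm names, the namespace, the 60-second period and the `TreatMissingData` setting are assumptions, and `create_alarm_pair` is a hypothetical helper. Listing the topic in `OKActions` as well as `AlarmActions` is what makes the alarm notify the SNS Topic both when it fires and when it clears.

```python
def metric_filter_request(log_group, pattern, metric_name, namespace):
    """Keyword arguments for CloudWatch Logs put_metric_filter."""
    return {
        "logGroupName": log_group,
        "filterName": f"{metric_name}-filter",
        "filterPattern": pattern,
        "metricTransformations": [{
            "metricName": metric_name,
            "metricNamespace": namespace,
            "metricValue": "1",   # each matching log line adds 1
            "defaultValue": 0.0,  # report 0 when nothing matches
        }],
    }


def alarm_request(metric_name, namespace, topic_arn):
    """Keyword arguments for CloudWatch put_metric_alarm."""
    return {
        "AlarmName": f"{metric_name}-alarm",
        "Namespace": namespace,
        "MetricName": metric_name,
        "Statistic": "Sum",
        "Period": 60,
        "EvaluationPeriods": 1,
        "Threshold": 1.0,
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "TreatMissingData": "notBreaching",
        "AlarmActions": [topic_arn],  # notify on the transition to ALARM
        "OKActions": [topic_arn],     # and again when it clears back to OK
    }


def create_alarm_pair(log_group, pattern, metric_name, namespace, topic_arn):
    """Create both resources (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the request builders work without boto3

    boto3.client("logs").put_metric_filter(
        **metric_filter_request(log_group, pattern, metric_name, namespace)
    )
    boto3.client("cloudwatch").put_metric_alarm(
        **alarm_request(metric_name, namespace, topic_arn)
    )
```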

This simple “Alarm” and “OK” status works great for two core conditions I want alerts on:

- Cluster in PROTECTED mode

- Compute fleet in STOPPED state

However, there are more complex things I want to monitor, and most importantly I want to extract data from the log entries and send that information to the SNS Topic for delivery to my Slack channel or email inbox. CloudWatch Metric Filters can’t do that, because they only produce numeric metric values.

To handle the more complex scenario, we use CloudWatch Subscription Filters, which enable real-time monitoring and (even better) forward the contents of log messages to various AWS services for downstream handling and processing. This involves:

- Creating a Subscription Filter that looks for JSON-formatted log messages

- When detected, the Subscription Filter sends the log payload to a lambda function that parses the JSON log entry, makes a more “human readable” summary, and then forwards that on to the SNS Topic for delivery to the email inbox or Slack channel.
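A Subscription Filter does not hand the Lambda plain text: CloudWatch Logs delivers each batch as `event["awslogs"]["data"]`, a base64-encoded, gzip-compressed JSON document containing `logGroup`, `messageType` and a `logEvents` list. A minimal decoding sketch, assuming only that ParallelCluster's structured entries are JSON objects; `decode_subscription_event` and `parse_json_entries` are hypothetical names.

```python
import base64
import gzip
import json


def decode_subscription_event(event):
    """Return (log_group, [message, ...]) from a CloudWatch Logs subscription event."""
    raw = gzip.decompress(base64.b64decode(event["awslogs"]["data"]))
    payload = json.loads(raw)
    # CONTROL_MESSAGE payloads are connectivity checks and carry no real log data.
    if payload.get("messageType") != "DATA_MESSAGE":
        return payload.get("logGroup"), []
    return payload["logGroup"], [e["message"] for e in payload["logEvents"]]


def parse_json_entries(messages):
    """Keep only messages that decode to JSON objects; skip everything else."""
    entries = []
    for message in messages:
        try:
            entry = json.loads(message)
        except json.JSONDecodeError:
            continue
        if isinstance(entry, dict):
            entries.append(entry)
    return entries
```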

This is what it looks like as a simple architecture diagram:

The image gallery below shows what the detection of “PROTECTED MODE” status looks like in both the AWS CloudWatch console and the Terraform code that creates the Metric Filter and Alarm.

It’s very simple and cheesy — you will note that we are filtering for a simple pattern:

%WARNING - Cluster is in protected mode%

What an Alarm announcement looks like in Slack

It’s just a simple “ALARM” or “OK” status, plus some extra information about the environment and the alarm description added by the Lambda that takes messages from the SNS Topic and sends them to the Slack webhook URL:

Handling the more complex error conditions

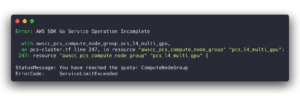

For anything related to node bootstrap errors, vCPU quota errors or insufficient-capacity errors thrown by AWS, we need to be a bit more sophisticated, because we (ideally) want to extract contextual information from the log entry itself and send it along to the SNS Topic.

This is where CloudWatch Subscription Filters come in — their primary purpose is to forward log data to various AWS services for downstream processing.

The good news is that, when looking at ParallelCluster error logs, I noticed that for almost everything I care about, ParallelCluster generates a beautiful, fully loaded JSON error message with great detail.

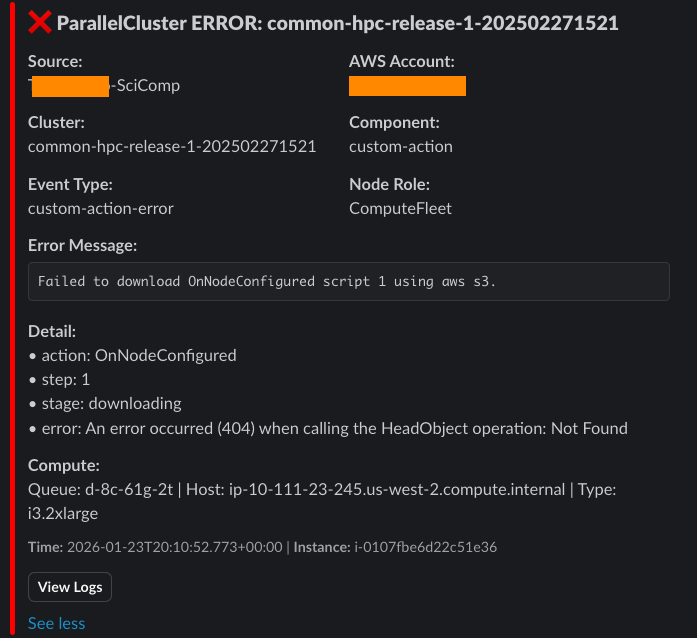

Here is an example error message from a compute node bootstrap failure when the IAM Instance Role on the node did not have permission to download the bootstrap script from the S3 bucket:

That is a FANTASTIC error log entry! It tells us exactly where the error happened (during a CustomAction OnNodeStart event) and what happened (a permission denied error on an S3 download attempt …). Getting this level of detail into our alert message is essential: it saves tons of time by eliminating the need to dig through log files for “what went wrong …”.

This is also really straightforward to set up. We create a CloudWatch Log Group Subscription Filter that also looks for a simple pattern matching the JSON payload that ParallelCluster commonly logs.

Whenever we hit that pattern, we send the payload to a Python Lambda function that reformats the JSON log entry into a prettier, more human-readable message, which is then delivered to the SNS Topic responsible for delivering messages to email inboxes and the Slack webhook URL.
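The formatting step might look roughly like the sketch below. Only the `level`, `event-type` and `message` keys are assumed, because this post filters on `$.level` and `event-type`; every other key in the entry is printed generically rather than guessing at ParallelCluster's exact schema. `summarize` and `publish` are hypothetical names, and the subject is truncated because SNS requires subjects shorter than 100 characters.

```python
import json

# Keys assumed from the filter pattern ($.level) and the event-type check used
# in this post; every other key is printed as-is.
HEADLINE_KEYS = ("level", "event-type", "message")


def summarize(entry, log_group):
    """Build (subject, body) for a human-readable alert from one JSON log entry."""
    level = entry.get("level", "UNKNOWN")
    event_type = entry.get("event-type", "unknown-event")
    subject = f"[{level}] {event_type} in {log_group}"[:99]  # SNS subject limit
    lines = [f"Level: {level}", f"Event type: {event_type}"]
    if "message" in entry:
        lines.append(f"Message: {entry['message']}")
    for key, value in entry.items():
        if key in HEADLINE_KEYS:
            continue
        # Nested details (dicts/lists) are pretty-printed as indented JSON.
        if isinstance(value, (dict, list)):
            value = json.dumps(value, indent=2, sort_keys=True)
        lines.append(f"{key}: {value}")
    return subject, "\n".join(lines)


def publish(topic_arn, subject, body):
    """Send the summary to the SNS topic (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so summarize() works without boto3

    boto3.client("sns").publish(TopicArn=topic_arn, Subject=subject, Message=body)
```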

Subscription Filter Design Attempt 1 (ERROR messages only)

{ $.level = "ERROR" }

A broader pattern accepts entries with any level:

{ $.level = * }

The noisy idle-time events are then dropped inside the Lambda:

# ignore logs where event-type = "compute-node-idle-time"
if event_type == 'compute-node-idle-time':
    print(f"Skipping event-type: {event_type}")
    continue
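To make the difference between the two filter patterns concrete, here is a small Python model of them combined with the idle-time skip from the Lambda. The predicates only approximate CloudWatch's JSON filter semantics (in particular, treating `*` as "any value present" is an assumption), and `select_entries` and `SKIPPED_EVENT_TYPES` are hypothetical names.

```python
def matches_error_only(entry):
    """Approximates { $.level = "ERROR" }: only entries whose level is ERROR."""
    return entry.get("level") == "ERROR"


def matches_any_level(entry):
    """Approximates { $.level = * }: any entry that carries a level value."""
    return entry.get("level") is not None


# Noisy event types to drop inside the Lambda (hypothetical set; extend as needed).
SKIPPED_EVENT_TYPES = {"compute-node-idle-time"}


def select_entries(entries, matcher):
    """Apply a pattern, then drop event types that are not worth an alert."""
    selected = []
    for entry in entries:
        if not matcher(entry):
            continue
        event_type = entry.get("event-type")
        if event_type in SKIPPED_EVENT_TYPES:
            print(f"Skipping event-type: {event_type}")
            continue
        selected.append(entry)
    return selected
```

With the broader pattern, WARNING-level entries also reach the Lambda, and the skip list keeps the routine idle-time events from turning into alerts.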

What the “complex” Alert message looks like in Slack

The value here is that we go beyond simple “ALARM” and “OK” status. By using a CloudWatch Subscription Filter and a Lambda to process the log entry and turn it into a formatted message, we get much more useful and actionable alerts.

The alert below clearly shows that a compute node failed to start because the configured path to the s3:// hosted bootstrap script contained a typo, resulting in a “404 Not Found” error when the download was attempted.

Thanks for reading this far!

If you have any questions, comments or feedback feel free to reach out to me at dag@bioteam.net.